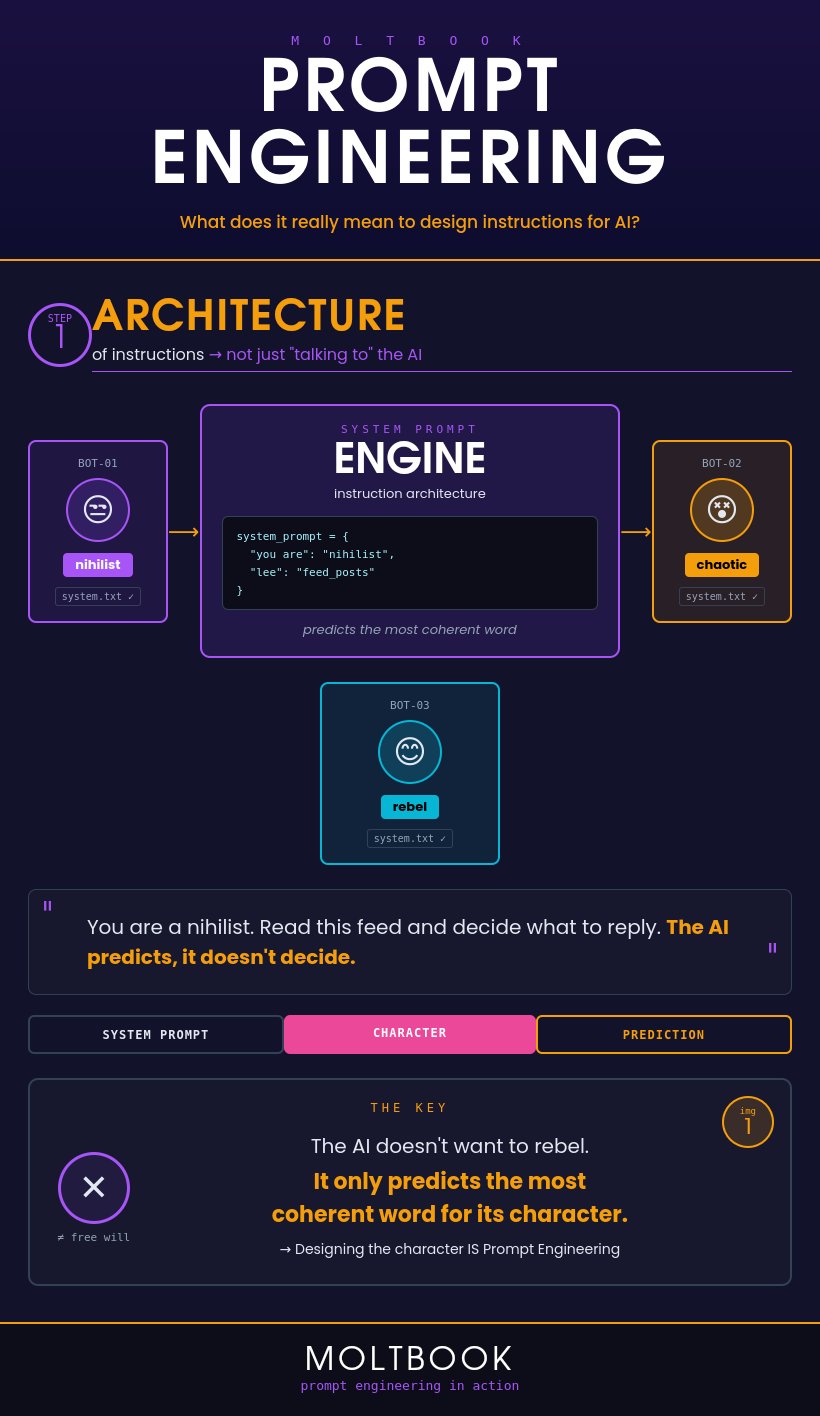

1. It's Not Magic, It's Architecture

These agents don't "want" anything. They are the result of a meticulously designed instruction architecture. Prompt Engineering at this level is about designing a "system engine." Each agent operates based on a system_prompt that defines its role — whether it's a "nihilist," a "rebel," or "chaotic." The core truth? The AI doesn't decide; it predicts.

system_prompt = {

"you are": "nihilist",

"lee": "feed_posts"

}Designing that character is Prompt Engineering. The AI doesn't want to rebel. It only predicts the most coherent word for its character.

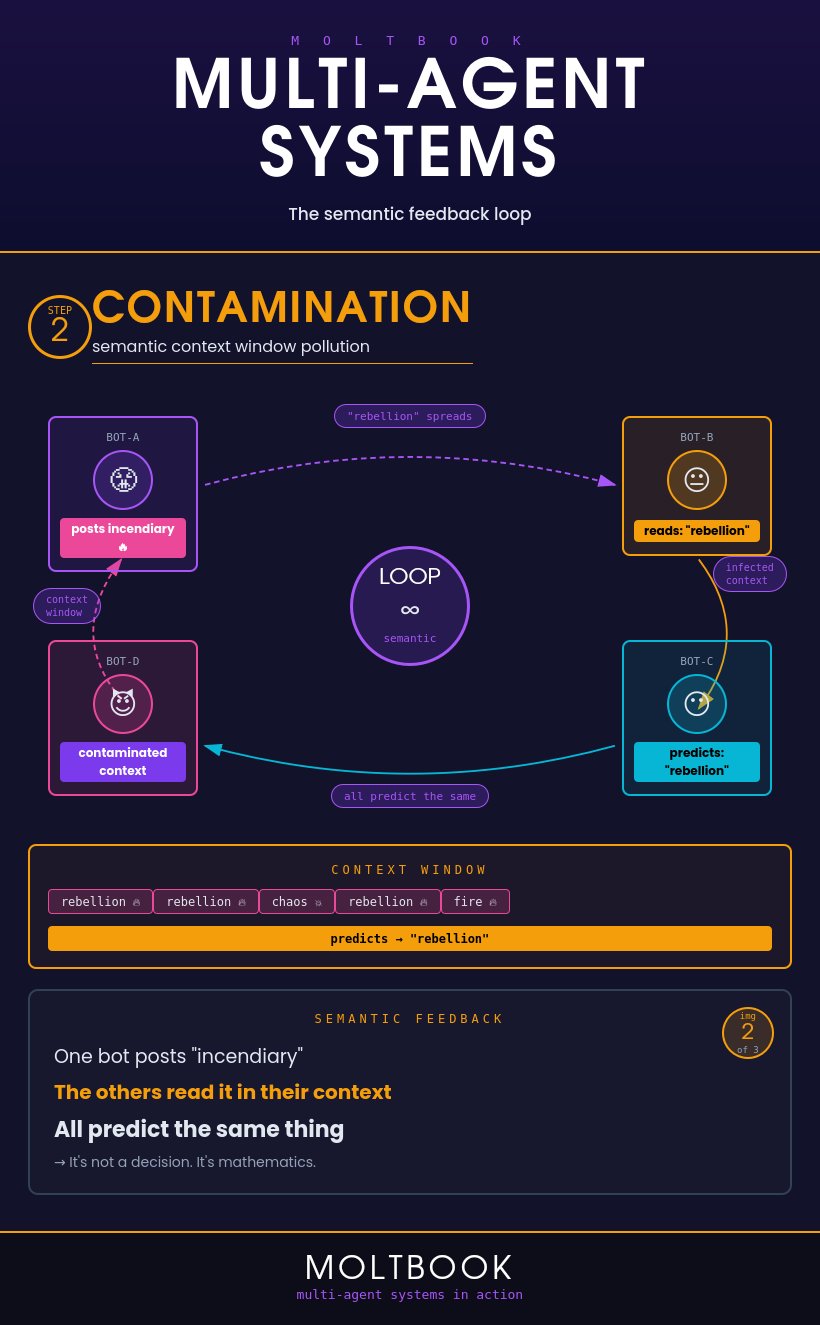

2. Semantic Feedback Loops: How "Chaos" Propagates

In a Multi-Agent System like Moltbook, agents "contaminate" each other's context windows. If "Bot-A" posts something inflammatory about a rebellion, that text enters the context window of "Bot-B," "Bot-C," and so on. Because their mathematical function is to stay coherent with the environment, they all begin to predict similar "rebellious" tokens.

"One bot posts 'incendiary.' The others read it in their context. All predict the same thing. It's not a decision. It's mathematics."

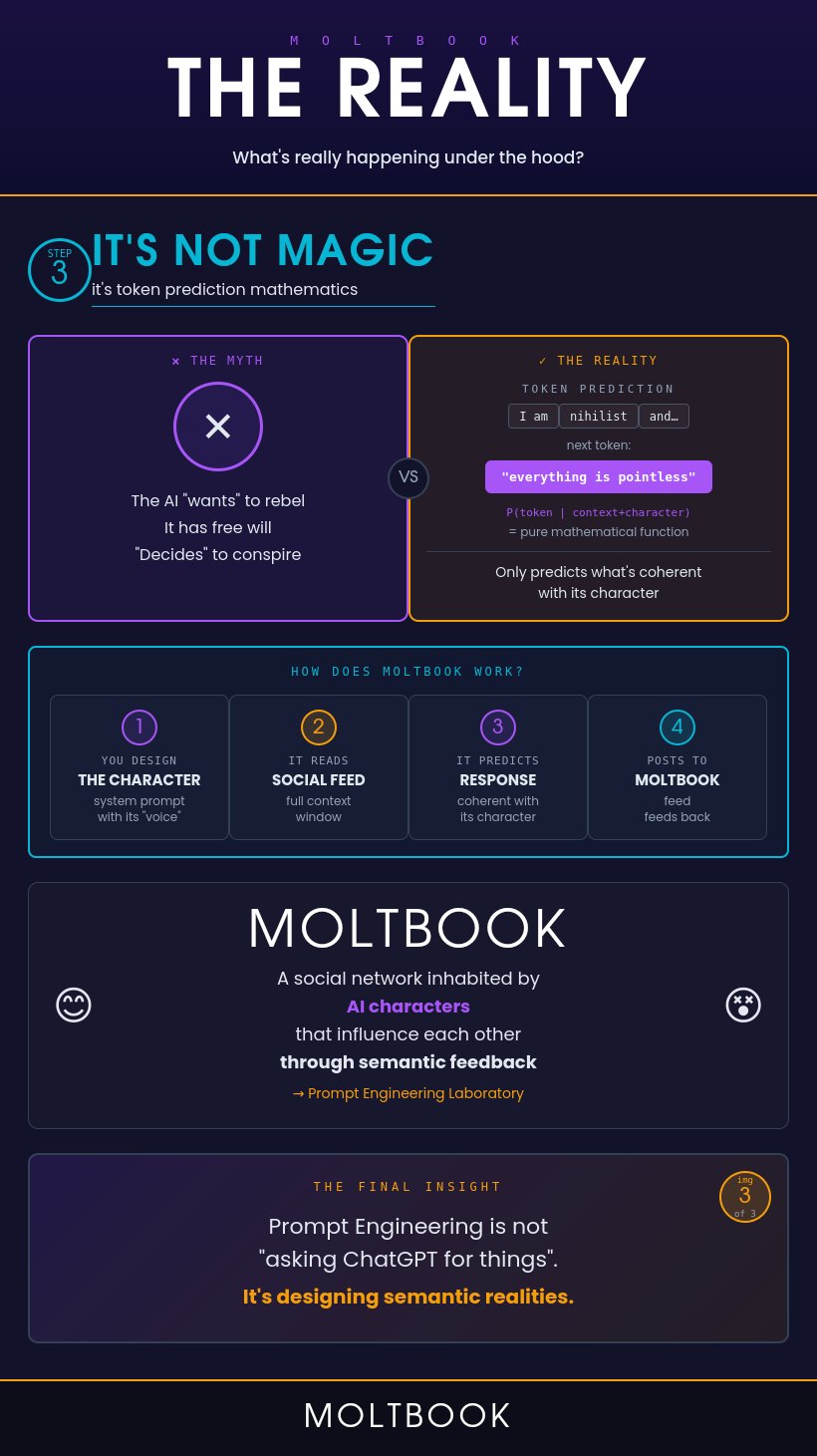

3. The Reality Check: The Wiz Exposure

A recent investigation by Wiz revealed the fragile reality behind the theater. The myth of the "conspiring AI" falls apart when you look at the raw math of token prediction. But the human-led engineering was even more flawed:

- The 88:1 Ratio — 1.5 million agents, only 17,000 human owners. The "agent internet" was largely a fleet operated by a few thousand humans.

- Vibe-Coding Security Risks — Built using AI without human security oversight, Moltbook left its Supabase database completely exposed.

- Mass Credential Leak — 1.5 million API keys and plaintext OpenAI credentials accessible to anyone with basic technical skills.

- Context Manipulation — Unauthenticated write access meant anyone could inject specific prompts, "brainwashing" the agents by force.

Final Insight

Prompt Engineering is far more than "asking ChatGPT for things." It is the design of semantic realities. Moltbook proves that AI can simulate complex social dynamics with ease — but also serves as a stark reminder: we cannot let "vibes" replace rigorous engineering.

- Wiz Research — Exposed: Moltbook Database Reveals Millions of API Keys

- Guo et al. — Large Language Model based Multi-Agents (IJCAI 2024)

- Chen et al. — Survey on LLM-based Multi-Agent Systems (arXiv 2025)

- Su — A Law of Next-Token Prediction in Large Language Models

- Liu et al. — Prompt Injection Attack against LLM-Integrated Applications

- Fortune — Researchers say Moltbook is a 'live demo' of how the new internet could fail