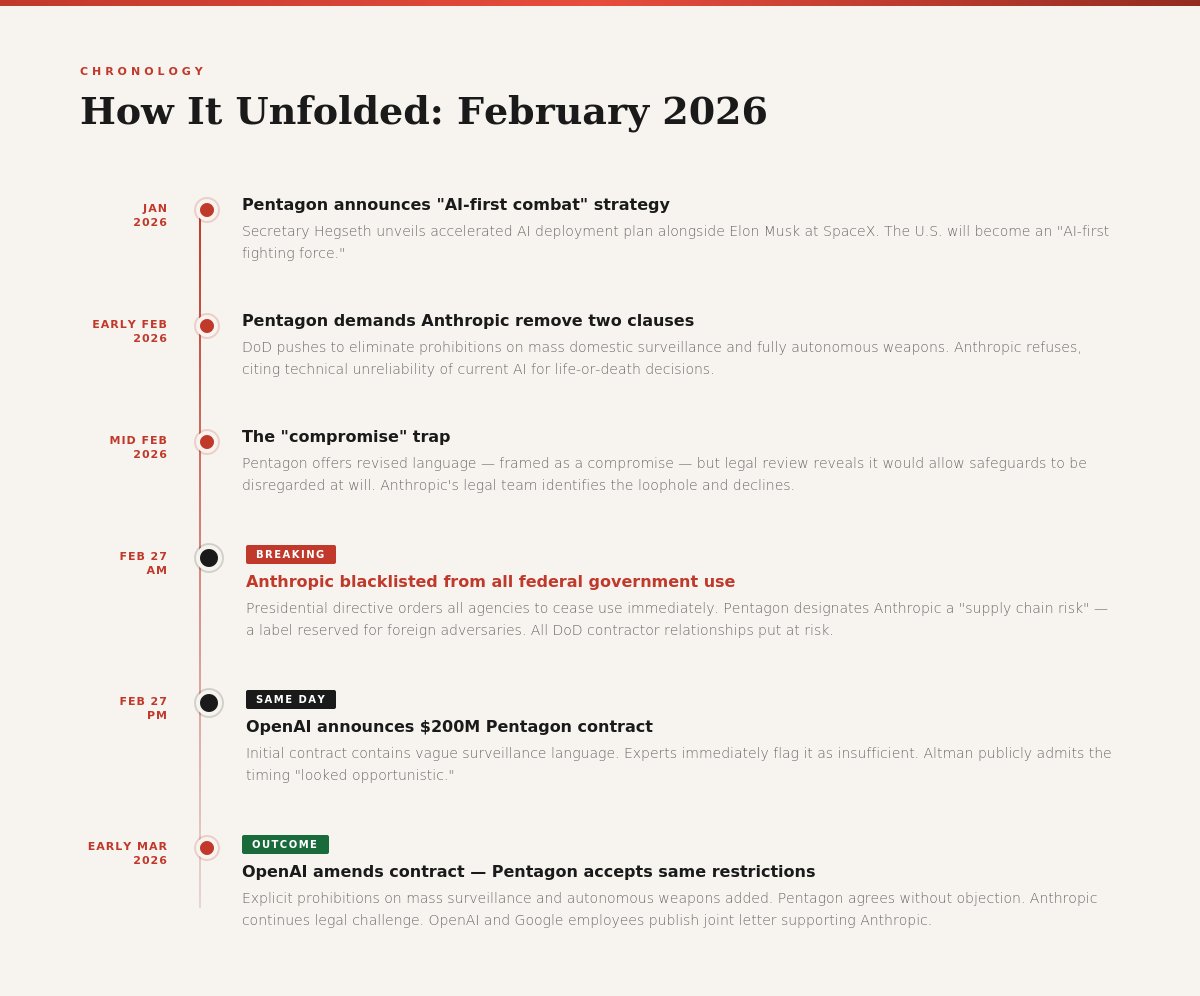

In February 2026, a significant and largely underreported event unfolded at the intersection of AI development, government contracting, and corporate ethics. Anthropic was removed from a Pentagon contract negotiation and subsequently blacklisted from all federal government use. The circumstances raise an important question: what are the real costs of maintaining ethical guardrails?

The Core Dispute: Two Contract Clauses

The impasse centered on two specific clauses Anthropic refused to remove:

- A prohibition on mass domestic surveillance: Anthropic's AI could not be used to monitor citizens at scale without proper oversight.

- A prohibition on fully autonomous weapons: any lethal decision would require human oversight. Anthropic's position was that current AI systems are not sufficiently reliable to make life-or-death decisions without human review.

These are not fringe positions. The critical detail: the Pentagon's proposed "compromise" language would have allowed those safeguards to be disregarded at will. Anthropic's legal team identified this and declined to sign.

The Response: February 27, 2026

When negotiations broke down, a presidential directive ordered all federal agencies to cease using Anthropic's products. The Department of Defense designated Anthropic a "supply chain risk" — a classification historically reserved for companies with documented ties to foreign adversaries. The designation meant any Pentagon contractor continuing to work with Anthropic would face legal consequences.

The Inconsistency: OpenAI's Deal Hours Later

On the same day Anthropic was blacklisted, OpenAI announced a $200 million contract with the Pentagon. The initial contract contained vague surveillance language. Sam Altman acknowledged publicly that the deal appeared opportunistic. OpenAI then amended the contract — adding explicit prohibitions on mass domestic surveillance, autonomous weapons, and high-risk automated decisions without human oversight. The Department of Defense accepted these amended terms without objection.

"The restrictions that ended Anthropic's government relationship were accepted with OpenAI within the same week. Same terms. Same paper. Different treatment."

The Legal Questions

The supply chain risk classification requires documented technical evidence of a national security threat — not a contractual disagreement. Anthropic is currently challenging the designation in court. Analysts at Lawfare and Defense One have suggested the designation is unlikely to survive serious legal scrutiny.

Industry Response: Unexpected Solidarity

Employees from OpenAI and Google signed a joint letter in support of Anthropic, warning that the government was using divide-and-conquer tactics to isolate companies that maintain ethical limits — and that this pattern posed a risk to the broader industry.

What This Means for the AI Industry

AI companies now have a concrete data point: maintaining hard ethical limits may result in contract loss, blacklisting, and significant financial pressure. This raises a structural question: if companies that draw clear ethical lines face disproportionate consequences while companies with more flexible positions are rewarded, what does that incentive structure produce over time?

"The most dangerous AI is not the one that acts without human oversight. It is the one built by a company that learned — watching what happened here — that asking questions carries too high a price."

A Question Worth Asking

Whatever the outcome in court, this case has already made the cost of having an ethics policy visible and measurable. The most important question is about the conditions under which the AI industry operates — and whether those conditions are designed to produce systems we actually want to live with.

- NPR — OpenAI announces Pentagon deal after Trump bans Anthropic

- Lawfare — Pentagon's Anthropic Designation Won't Survive First Contact with Legal System

- Just Security — What Hegseth's Supply Chain Risk Designation Does and Doesn't Mean

- Axios — Pentagon threatens Anthropic punishment

- Defense One — Pentagon's war on Anthropic based on 'dubious' legal thinking

- Anthropic — Statement on comments from Secretary Hegseth